Regression Testing in Viabl.ai

Overview

The regression testing feature in Viabl.ai runs a set of predefined test cases against a new version of a knowledge base and compares the resulting decisions with the decisions stored from executing a previous version of the same knowledge base. Any differences are highlighted in a test report so that the developer can assess the accuracy of the decisions made by the new version of the knowledge base.

This feature also allows the developer to run what-if scenarios to test the impact of different decision models on the test cases.

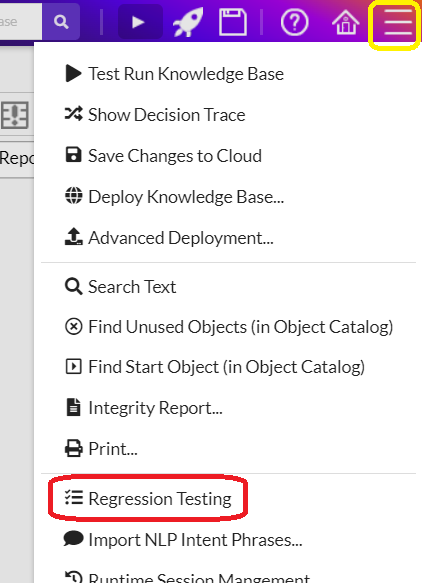

Accessing the Regression Testing Feature

Regression testing is accessed via the menu item in the main Viabl.ai editor menu.

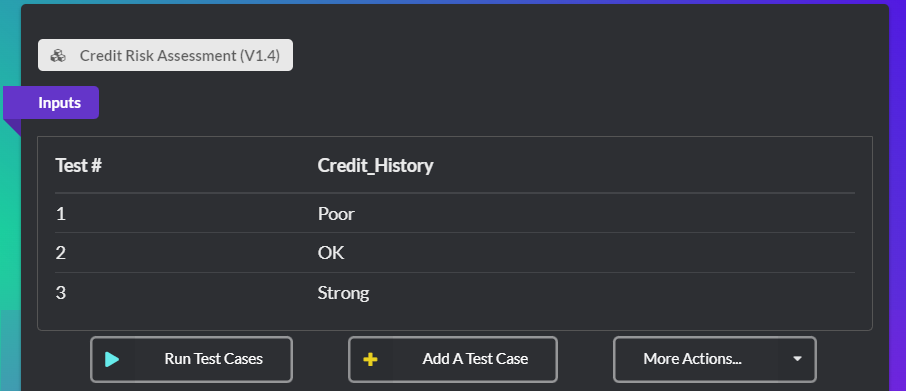

The Main Testing UI

The top section of the UI shows a limited number of stored test cases. Test cases can be created either manually (via the Add a test case button) or automatically (via the Auto-generate Test Cases item under the More Actions menu).

The columns in the test cases represent the knowledge base inputs specified in the Viabl.ai platform.

Once test cases have been set up, they can be run with the Run Test Cases button.

The results of the test cases are displayed at the bottom of the UI. By default, test cases whose results match the stored decisions are not shown in the report. To see the results of all the runs, check the Show all test cases option.
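
As a conceptual illustration only, the comparison and the default filtering of the report can be pictured with the short Python sketch below. It is not Viabl.ai code: the `CaseResult` type, the `build_report` function, and the decision values are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class CaseResult:
    """One test case outcome (hypothetical structure, for illustration only)."""
    case_id: str
    expected: str  # decision stored from the previous knowledge base version
    actual: str    # decision produced by the new knowledge base version

    @property
    def matches(self) -> bool:
        return self.expected == self.actual


def build_report(results, show_all=False):
    """Return the rows to display: only differing cases unless show_all is set."""
    return [r for r in results if show_all or not r.matches]


results = [
    CaseResult("case-1", "Approve", "Approve"),
    CaseResult("case-2", "Refer", "Decline"),
]

default_report = build_report(results)              # differing cases only
full_report = build_report(results, show_all=True)  # every case
```

Here `show_all` plays the role of the Show all test cases option: with it off, only the case whose decision changed appears in the report.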

External Reporting

The full test report can be downloaded in CSV format so that more bespoke reports can be produced (e.g. in Microsoft Excel). To download the results, use the Download results menu item under the results' More Actions menu.
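
A downloaded CSV file can also be processed with a script rather than a spreadsheet. The sketch below uses Python's standard `csv` module to count matching and differing cases. The column layout of the export is not described here, so the column names (`case_id`, `expected`, `actual`) and the sample rows are assumptions made for illustration; adjust them to the headers in the actual file.

```python
import csv
import io
from collections import Counter

# Hypothetical sample standing in for a downloaded results file.
# The real export may use different column names.
SAMPLE_CSV = """case_id,expected,actual
case-1,Approve,Approve
case-2,Refer,Decline
case-3,Decline,Decline
"""


def summarise(csv_file):
    """Count rows where the stored and new decisions match or differ."""
    counts = Counter()
    for row in csv.DictReader(csv_file):
        key = "match" if row["expected"] == row["actual"] else "difference"
        counts[key] += 1
    return counts


summary = summarise(io.StringIO(SAMPLE_CSV))
print(dict(summary))
```

To read a real file, replace `io.StringIO(SAMPLE_CSV)` with an opened file, for example `open("results.csv", newline="")`, where the file name is again a placeholder.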

Test Set Creation

To jump-start the regression testing process, an initial set of test cases can be generated automatically (at random) from the required input parameters. Further test cases can then be added manually or via the import feature.
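
The idea of random generation can be sketched as follows. This is a minimal illustration under stated assumptions, not a description of how Viabl.ai generates cases internally: the input names, their allowed values, and the `generate_cases` function are hypothetical.

```python
import random

# Hypothetical input definitions: each input maps to its allowed values.
INPUTS = {
    "age": list(range(18, 91)),
    "employment": ["employed", "self-employed", "retired"],
    "has_existing_loan": [True, False],
}


def generate_cases(inputs, count, seed=None):
    """Build `count` test cases by picking a random allowed value per input."""
    rng = random.Random(seed)  # a fixed seed makes the generated set repeatable
    return [
        {name: rng.choice(values) for name, values in inputs.items()}
        for _ in range(count)
    ]


cases = generate_cases(INPUTS, count=5, seed=42)
for case in cases:
    print(case)
```

Random cases give broad coverage of the input space quickly, which is why manually added or imported cases are still useful for targeting known edge cases.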